geek

- Since I’m currently on my fast home Internet, that download will probably last about 20 seconds.

- I have a fast SSD, so the “Extracting files” step might be 6 seconds long.

- “Copying files into place” will run at about the same speed, for another 8 seconds.

- My shiny new CPU can chew through 100 CPU units in 10 seconds.

- URL (the attacker will have this)

- character set (dropdown gives you 6 choices)

- which of nine hash algorithms was used (actually 13 — the FAQ is outdated)

- modifier (algorithmically, part of your password)

- username (attacker will have this or can likely guess it easily)

- password length (let’s say, likely to be between 8 and 20 chars, so 13 options)

- password prefix (stupid idea that reduces your password’s complexity)

- password suffix (stupid idea that reduces your password’s complexity)

- which of nine l33t-speak levels was used

- when l33t-speak was applied (total of 28 options: 9 levels each at three different “Use l33t” times, plus “not at all”)

- Download VirtualBox. I used version 4.1.4. The version available to you today might look different but should work mostly the same way.

- Open the “VirtualBox-[some-long-number]-OSX.dmg” disk image.

- Double-click the “VirtualBox.mpkg” icon to run the installer.

- Click “Continue”.

- Click “Continue”.

- Click “Install”.

- Enter your password and click “Install Software”.

- When it’s finished copying files, etc., click “Close”.

- Download the FreeDOS “Base CD” called “fdbasecd.iso”. Note: the first mirror I tried to download from didn’t work. If that happens, look around on the other mirrors until you find one that does.

- Open your “Applications” folder and run the “VirtualBox” program.

- Click the “New” button to create a new virtual machine. This launches the “New Virtual Machine Wizard”. Click “Continue” to get past the introduction.

- Name your new VM something reasonable. I used “FreeDOS”, and whatever name you enter here will appear throughout all the following steps so you probably should, too.

- Set your “Operating System” to “Other”, and “Version” to “DOS”. (If you typed “FreeDOS” in the last step, this will already be done for you.) Continue.

- Leave the “Base Memory Size” slider at 32MB and continue.

- Make sure “Start-up Disk” is selected, choose “Create new hard disk”, and continue.

- Select “File type” of “VDI (VirtualBox Disk Image)” and continue.

- Select “Dynamically allocated” and continue.

- Keep the default “Location” of “FreeDOS”.

- Decision time: how big do you want to make your image? The full install of FreeDOS will take about 7MB, and you’ll want to leave a little room for your own files. On the other hand, the larger you make this image, the longer it’ll take to copy onto your USB flash drive. You certainly don’t want to make it so large that it won’t actually fit on your USB flash drive. An 8GB nearly-entirely-empty image will be worthless if you only have a 2GB drive. I splurged a little and made my image 32MB (by clicking in the “Size” textbox and typing “32MB”. I hate size sliders.). Click “Continue”.

- Click “Create”.

- Make sure your new “FreeDOS” virtual machine is highlighted on the left side of the VirtualBox window.

- On the right-hand side, look for the section labeled “Storage” and click on the word “Storage” in that title bar.

- Click the word “Empty” next to the CD-ROM icon.

- Under “Attributes”, click the CD-ROM icon to open a file chooser, select “Choose a virtual CD/DVD disk file…”, and select the FreeDOS Base CD image you downloaded at the beginning. It’ll probably be in your “Downloads” folder. When you’ve selected it, click “Open”.

- Back on the “FreeDOS — Storage” window, click “OK”.

- Back on the main VirtualBox window, near the top, click “Start” to launch the virtual machine you just made.

- A note about VirtualBox: when you click the VM window or start typing, VirtualBox will “capture” your mouse cursor and keyboard so that all key presses will go straight to the VM and not your OS X desktop. To get them back, press the left [command] key on your keyboard.

- At the FreeDOS boot screen, press “1” and [return] to boot from the CD-ROM image.

- Hit [return] to “Install to harddisk”.

- Hit [return] to select English, or the up and down keyboard arrow keys to choose another language and then [return].

- Hit [return] to “Prepare the harddisk”.

- Hit [return] in the “XFDisk Options” window.

- Hit [return] to open the “Options” menu. “New Partition” will be selected. Hit [return] again. “Primary Partition” will be selected. Again, [return]. The maximum drive size should appear in the “Partition Size” box. If not, change that value to the largest number it will allow. Hit [return].

- Do you want to initialize the Partition Area? Yes. Hit [return].

- Do you want to initialize the whole Partition Area? Oh, sure. Press the left arrow key to select “YES”, then hit [return].

- Hit [return] to open the “Options” menu again. Use the arrow keys to scroll down to “Install Bootmanager” and hit [return].

- Press [F3] to leave XFDisk.

- Do you want to write the Partition Table? Yep. Press the left arrow to select “YES” and hit [return]. A “Writing Changes” window will open and a progress bar will scroll across to 100%.

- Hit [return] to reboot the virtual machine.

- This doesn’t actually seem to reboot the virtual machine. That’s OK. Press the left [command] key to give the mouse and keyboard back to OS X, then click the red “close window” button on the “FreeDOS [running]” window to shut it down. Choose “Power off the machine” and click “OK”.

- Back at the main VirtualBox window, click “Start” to re-launch the VM.

- Press “1” and [return] to “Continue to boot FreeDOS from CD-ROM”, just like you did before.

- Press [return] to select “Install to harddisk” again. This will take you to a different part of the installation process this time.

- Select your language and hit [return].

- Make sure “Yes” is selected, and hit [return] to let FreeDOS format your virtual disk image.

- Proceed with format? Type “YES” and hit [return]. The format process will probably finish too quickly for you to actually watch it.

- Now you should be at the “FreeDOS 1.0 Final Distribution” screen with “Continue with FreeDOS installation” already selected. Hit [return] to start the installer.

- Make sure “1) Start installation of FreeDOS 1.0 Final” is selected and hit [return].

- You’ll see the GNU General Public License, version 2 text. Follow that link and read it sometime; it’s pretty brilliant. Hit [return] to accept it.

- Ready to install the FreeDOS software? You bet. Hit [return].

- Hit [return] to accept the default installation location.

- “YES”, the above directories are correct. Hit [return].

- Hit [return] again to accept the selection of programs to install.

- Proceed with installation? Yes. Hit [return].

- Watch in amazement at how quickly the OS is copied over to your virtual disk image. Hit [return] to continue when it’s done.

- The VM will reboot. At the boot screen, press “h” and [return] to boot your new disk image. In a few seconds, you’ll see an old familiar “C:” prompt.

- Press the left [command] key to release your keyboard and mouse again, then click the red “close window” icon to shut down the VM. Make sure “Power off the machine” is selected and click “OK”.

- Open a Terminal.app window by clicking the Finder icon in your dock, then “Applications”, then opening the “Utilities” folder, then double-clicking “Terminal”.

- Copy this command, paste it into the terminal window, then hit [return]:

- Plug your USB flash drive into your Mac.

- If your Mac can’t read the drive, a new dialog window will open saying “The disk you inserted was not readable by this computer.” Follow these instructions:

- Click “Ignore”.

- Go back into your terminal window and run this command:

diskutil list

- You’ll see a list of disk devices (like “/dev/disk2”), their contents, and their sizes. Look for the one you think is your USB flash drive. Run this command to make sure, after replacing “/dev/disk2” with the actual name of the device you picked in the last step:

diskutil info /dev/disk2

- Make sure the “Device / Media Name:” and “Total Size:” fields look right. If not, look at the output of diskutil list again to pick another likely candidate and repeat the step until you’re sure you’ve picked the correct drive to completely eradicate, erase, destroy, and otherwise render completely 100% unrecoverable. OS X will attempt to prevent you from overwriting the contents of drives that are currently in use (like, say, your main system disk) but don’t chance it. Remember the name of this drive!

- If your Mac did read the drive, it will have automatically mounted it and you’ll see its desktop icon. Follow these instructions:

- Go back into your terminal window and run this command:

diskutil list

- Look for the drive name in the output of that command. It will have the same name as the desktop icon.

- Look for the name of the disk device (like “/dev/disk2”) for that drive and remember it (with the same warnings as in the section above that you got to skip).

- Unmount the drive by running this command:

diskutil unmount "/Volumes/[whatever the desktop icon is called]"

- This is not the same as dragging the drive into the trash, so don’t attempt to eject it that way.

- Go back into your terminal window and run this command:

- Go back to your terminal window.

- Run these commands, but replace “/dev/fakediskname” with the device name you discovered in the previous section:

cd ~/Desktop; sudo dd if=freedos.img of=/dev/fakediskname bs=1m

- After the last command finishes, OS X will automatically mount your USB flash drive and you’ll see a new “FREEDOS” drive icon on your desktop.

- Drag your BIOS flasher utility, game, or other program onto the “FREEDOS” icon to copy it onto the USB flash drive.

- When finished, drag the “FREEDOS” drive icon onto the trashcan to unmount it.

- You’re finished. Use your USB flash drive to update your computer’s BIOS, play old DOS games, or do whatever else you had in mind.

- Keep the “freedos.img” file around. If you ever need it again, start over from the “Prepare your USB flash drive” section which is entirely self-contained. That is, it doesn’t require any software that doesn’t come pre-installed on a Mac, so even if you’ve uninstalled VirtualBox you can still re-use your handy drive image.

- Create a new save file and write the information to it.

- Delete the old file.

I think I’m going to upgrade my personal MacBook Air to Sequoia tonight. YOLO!

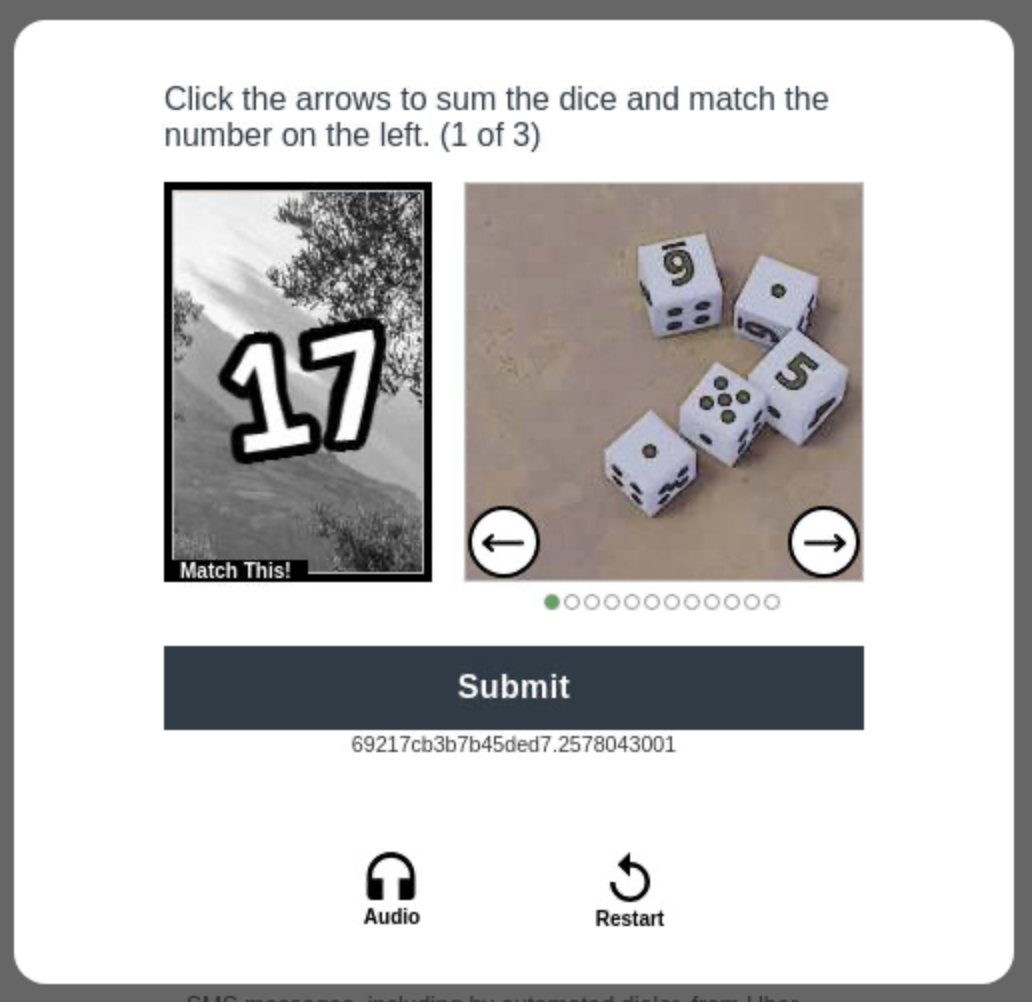

What sadist invented this captcha?

Veilid in The Washington Post

I’ve been helping on a fun project with some incredibly brilliant friends. I found myself talking about it to a reporter at The Washington Post. The story just came out. My part was crucial, insightful, and far, far down the page:

Once known for distributing hacking tools and shaming software companies into improving their security, a famed group of technology activists is now working to develop a system that will allow the creation of messaging and social networking apps that won’t keep hold of users’ personal data. […] “It’s a new way of combining [technologies] to work together,” said Strauser, who is the lead security architect at a digital health company.

You bet I’m letting this go to my head.

At work: “Kirk, I think you’re wrong.” “Well, one of us was featured in WaPo, so we’ll just admit that I’m the expert here.”

At home: “Honey, can you take the trash out?” “People in The Washington Post can’t be expected to just…” “Take this out, ‘please’.”

But really, Veilid is incredibly neat and I’m awed by the people I’ve been lucky to work with. Check it out after the launch next week at DEF CON 31.

Easily copy file contents with ForkLift

I use the ForkLift 3 file manager on my Mac. Part of my job involves copying-and-pasting the contents of various files into web forms. I made a trivial little shell script so ForkLift can help me:

#!/bin/sh

if [ "$#" -ne 1 ]; then

echo "Expected exactly 1 filename." >&2

exit 1

fi

pbcopy < "$1"

Then I created a new “Tool” called “Contents to Clipboard” that calls the script with the name of the selected file.

/Users/me/bin/copy_contents.sh $SOURCE_SELECTION_PATHS

Now I can select a file, select the Commands > Contents to Clipboard menu, and voila! The file’s contents are ready to be pasted into another app.

Jira is a code smell

Good Project Managers are important. As an Application Programmer, they’re your Interface to the rest of the organization. They’re your API. Believe me, you want that interface between you and their managers, unless you like giving status updates and making projection reports to pass around.

In fairness to PMs, we engineers don’t make it easy for them to do their jobs. Does this sound familiar?

PM: How long do you think that’ll take?

Engineer: Oh, I don’t know.

PM: Can you give me an estimate?

Engineer: Uh, a while.

PM: OK. I’ll write “a while” on my report. I’m sure the CEO will love that.

Engineer: Fine. 2 months.

PM: Thanks!

Now, think of all the task management applications you’ve been asked to use. Each claims to be useful for engineers (“put all your requirements and future work in one place!”), but they’re also marketed to PMs (“be able to tell your manager how long the engineers are going to take!”).

Jira is one of these. It’s awful. It’s a code smell, and if you’re interviewing at a company that says “we track everything in Jira!”, ask a lot of follow-up questions to figure out if you genuinely want to work there.

Jira itself is… fine. In isolation, it’s neither wonderful nor terrible. It just is, like a rock, tree, or slime mold. Its main feature — and what makes it potentially evil — is its immense configurability that makes it irresistible to the wrong kinds of Project Managers. A good PM will see it, tweak a couple of knobs, and start putting stories in it for the engineers to hack on. A bad PM will see it, embrace every configuration option available, and saddle Engineering with a 23 step status workflow where each story goes from “Inkling” to “Idea” to “Rough Draft” to “Planning Review Meeting 3” to “Code Complete” to “Ready for Testing” to “Testing” to “Tested” and eventually on to “Done”, “Shipped”, “Finished”, “Announced”, “Demoed”, and then “T-Shirts”. The bad PM will love this because they can give reports like “this sprint is 43.8% done and we’re 97.53% likely to hit our target”, and the engineers will hate this because they’ll spend as much time updating story statuses as they do working. A good PM won’t care about all that and will prefer to use something simpler.

A useful task manager will be somewhat opinionated. It will almost, but not quite, do what everyone wants, and will annoy everyone equally with the few things they think it does wrong. A tar pit of a task manager will claim to be everything to everyone after customization, meaning that a few people will think it’s heavenly and everyone else will despise it with the heat of a thousand suns.

Jira is one of the bad ones. If you run across someone who adores it, tiptoe away quietly and quickly.

The Itanic Has Sunk

By today, July 29, 2021, Intel has shipped the last of its Itanium processors, the last holdout of a rough decade of their history. You’d be forgiven for not having heard of this unusual CPU, as it only carved out a niche in a few supercomputers in the early 2000s and some legacy mainframe holdouts.

In 1994, Intel and HP looked around and saw a wide variety of successful server CPU architectures like Alpha, MIPS, SPARC, and POWER. This annoyed them and they decided to make a new CPU that no one would want to use. To these ends they invented an instruction set architecture that was impossible to program efficiently, planning that future compilers would be clever enough to make software run acceptably well. (This never happened because it turned out that anyone smart enough to write these compilers would rather be doing almost anything else.)

In 2000, Intel launched the NetBurst Pentium 4 CPU. It had serious design compromises that would hypothetically allow CPUs to run at upwards of 10GHz. Since these beasts could fry an egg at 3GHz, it was good that they never came anywhere near 10GHz as the heat would likely be sufficient to induce nearby hydrogen atoms to fuse.

Customers begged Intel to release a 64-bit Pentium-compatible CPU. They refused because they knew this would cannibalize Itanium. Why write software for a weird and uncommon architecture if you could use something like the terrible x86 instruction set you already knew, but better?

In 2003, AMD launched their 64-bit, but Pentium-compatible, Opteron CPU. Everyone stopped buying Intel CPUs for a while. Within a few years Intel made their own 64-bit, but AMD-compatible, CPUs to avoid entirely losing the desktop and small server market. They were right earlier: almost everyone immediately embraced AMD’s instruction set and no one but HP wanted anything to do with Itanium.

And then, for a long time, nothing much happened. That’s happy news when you’re talking about earthquakes or tornados, but not so hot when you’re talking about sales of processors you spent a few billion dollars developing.

In 2015, HP admitted defeat and launched a line of mainframes using AMD’s 64-bit instruction set so developers could write and test software on systems that cost both over and under a million dollars.

Intel was contractually obligated to keep Itanium limping along but it was apparent their heart wasn’t in it. In 2019 they accepted the inevitable and announced that Itanium would be officially dead as of today. The final batch of CPUs was built on a 32nm process when everyone else was on to 10nm, 7nm, and 5nm designs.

Goodbye, Itanic. You were a strange, unloved little detour, better known for the good designs you killed than for any successes of your own. Few will miss you.

Ironically, in 2020 Apple launched their own desktop-class CPU that wasn’t compatible with more common Intel or AMD designs. The difference was that Apple’s M1 was actually nice and fast, both for developers and end users.

Opt-Out Tracking is an Awful Idea

Someone invented a new standardized way to opt out of telemetry for command line applications. This is a horrid idea.

The existence of the setting establishes “tracking is OK!” as the default, and makes opting out the responsibility of the end user. With this in place, if a company collects the names of all the files in my home directory, it’s my fault for not tweaking some random setting correctly. (For technical types: don’t forget to set the “don’t track me!” variable in your crontabs, or else they’ll run with tracking enabled! Be sure to add it to your sudoers file, or now root commands spy on you!)

If this should exist at all, it should be in the form of a “go ahead and spy on me!” whitelist, with all telemetry and other spyware disabled unless explicitly enabled. Then it becomes the responsibility of each application’s author to encourage their users to enable it. Or better, get over the bizarre and radical notion of enabling spyware in command line utilities.
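To make the opt-in idea concrete, here is a minimal sketch of what such a check could look like inside a command line tool. The `TELEMETRY_OPT_IN` variable name and the comma-separated format are made up for illustration; no such standard exists as far as this post is concerned. The point is only that an unset or empty variable means "no tracking".

```python
import os


def telemetry_enabled(app_name, environ=None):
    """Return True only if the user explicitly listed this app.

    TELEMETRY_OPT_IN is a hypothetical variable holding a
    comma-separated allowlist of application names.
    """
    env = os.environ if environ is None else environ
    allowed = {name.strip() for name in env.get("TELEMETRY_OPT_IN", "").split(",")}
    allowed.discard("")  # an empty or missing variable allows nothing
    return app_name in allowed


if __name__ == "__main__":
    # With nothing set, cron jobs, sudo, and fresh shells all default to "off".
    print(telemetry_enabled("mycoolapp"))
```

With this shape, forgetting to set the variable in a crontab or sudoers file fails safe: the worst case is that an app the user wanted to help doesn't phone home.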

Smart progress bars

Progress bars suck at predicting how long things will take. I’ll tell you what I want (what I really really want): a system-wide resource that receives a description of what the progress bar will be measuring and uses it to make an informed estimate of the entire process’s duration. For example, suppose that an application installer will do several things in series, one after another. Perhaps an explanation of that process could be written in a machine-readable format like this:

vendor: Foo Corp
name: My Cool App installer
stages:
  - Downloading files:
      - resource: internet
        size: 1000  # Number of MB to download
  - Extracting files:
      - resource: disk_read
        size: 1000  # Size of the downloaded archive file, in MB
      - resource: disk_write
        size: 2000  # Size of the extracted archive file, in MB
  - Copying files into place:
      - resource: disk_read
        size: 2000  # Now we read the extracted files...
      - resource: disk_write
        size: 2000  # and copy them elsewhere.
  - Configuring:
      - resource: cpu
        size: 100  # Expected CPU time in some standard-ish unit

Because I’ve used the progress bar resource before, it knows about how long each of those things might take:

Ta-da! The whole installation should run about 44 seconds. When the installer runs, instead of updating the progress bar manually like

update_progress_bar(percent=23)

it would tell the resource how far it had gotten in its work with a series of updates like

update_progress_bar('Downloading files', internet=283)

...

update_progress_bar('Copying files into place', disk_read=500)

update_progress_bar('Copying files into place', disk_write=500)

...

update_progress_bar('Configuring', cpu=30)

The app itself would not be responsible for knowing what percent along it is. How could it? It knows nothing about my system! Furthermore, statistical modeling could lead to more accurate predictions with observations like “Foo Corp always underestimates how many CPU units something will take compared to every other vendor so add 42% to their CPU numbers” or “Bar, Inc.’s website downloads are always slow, so cap the Internet speed at 7MB/s for them.” Hardware vendors could ship preconfigured numbers for new systems based on their disk and CPU speeds so the system can make decent estimates right out of the box. But once a new system is deployed, it gathers observations about its real performance to make better predictions that evolve as it’s used.
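As a rough sketch of how such a resource might turn the installer's description into a time estimate, consider the Python below. It is only an illustration, not an existing API. The throughput numbers are assumptions back-derived from the example timings (50 MB/s Internet, 500 MB/s disk reads and writes, 10 CPU units per second), and it assumes the reads and writes within a stage happen one after another.

```python
# Hypothetical throughput this machine has "learned"; not real measurements.
RATES = {
    "internet": 50.0,     # MB/s (1000 MB in about 20 s)
    "disk_read": 500.0,   # MB/s
    "disk_write": 500.0,  # MB/s
    "cpu": 10.0,          # "CPU units" per second (100 units in 10 s)
}

# The installer description from the example, as plain Python data.
STAGES = {
    "Downloading files": {"internet": 1000},
    "Extracting files": {"disk_read": 1000, "disk_write": 2000},
    "Copying files into place": {"disk_read": 2000, "disk_write": 2000},
    "Configuring": {"cpu": 100},
}


def seconds_for(work, rates=RATES):
    """Estimated seconds for a {resource: amount} mapping, done sequentially."""
    return sum(amount / rates[resource] for resource, amount in work.items())


def total_seconds(stages=STAGES, rates=RATES):
    """Estimated duration of the whole job on this machine."""
    return sum(seconds_for(work, rates) for work in stages.values())


def percent_done(progress, stages=STAGES, rates=RATES):
    """progress maps stage name -> {resource: amount finished so far}."""
    elapsed = sum(seconds_for(done, rates) for done in progress.values())
    return 100.0 * elapsed / total_seconds(stages, rates)


if __name__ == "__main__":
    print(total_seconds())
    print(percent_done({"Downloading files": {"internet": 283}}))
```

With these assumed rates, `total_seconds()` lands on the same 44 seconds, and a finished download alone puts the bar at roughly 45%, because the bar tracks estimated time rather than a count of completed stages.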

We should be able to do a much better job of guessing how long it’s going to take to install an app. This solution needs to exist.

New favorite command: Zoxide

My favorite new command is zoxide. It’s like a faster z, autojump, or fasd.

In summary, it learns which directories you visit often with your shell’s cd command, then lets you jump to them based on pattern matching. In the event of a tie it picks the one you’ve used most frequently and recently. For instance, if I type z do then it executes cd "~/Library/Application Support/MultiDoge" for me because that’s the best match for “do” in recent history. An optional integration with fzf lets you interactively search your directory history before jumping to one.
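For a feel of how "most frequently and recently" ranking can work, here is a toy sketch. It is not zoxide's actual algorithm or data format; the `DirHistory` class, the substring matching, and the decay formula are all invented for illustration.

```python
import time


class DirHistory:
    """Toy directory-jump history ranked by a made-up "frecency" score."""

    def __init__(self):
        self.visits = {}  # path -> (visit_count, last_visit_timestamp)

    def record(self, path, now=None):
        """Remember one more visit to path (what a cd hook would call)."""
        now = time.time() if now is None else now
        count, _ = self.visits.get(path, (0, now))
        self.visits[path] = (count + 1, now)

    def score(self, path, now):
        """More visits raise the score; hours since the last visit lower it."""
        count, last = self.visits[path]
        age_hours = max(0.0, now - last) / 3600.0
        return count / (1.0 + age_hours)

    def jump(self, pattern, now=None):
        """Return the best-scoring path containing pattern, or None."""
        now = time.time() if now is None else now
        matches = [p for p in self.visits if pattern.lower() in p.lower()]
        if not matches:
            return None
        return max(matches, key=lambda p: self.score(p, now))


if __name__ == "__main__":
    history = DirHistory()
    history.record("/Users/me/Documents")
    for _ in range(3):
        history.record("/Users/me/Library/Application Support/MultiDoge")
    print(history.jump("do"))
```

Both paths contain "do", so the tie is broken by the score: three recent visits beat one.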

It’s lightning fast and integrates perfectly with common shells (even Fish which is my favorite).

I didn’t even know I’d been missing a tool like this.

Use local Git repos for personal work

I’ve heard a lot of online arguments about whether you should host your Git-based projects in GitHub or GitLab, but a lot of them miss an obvious option. Is this repo for your own personal work that you don’t intend to share with others? Great! You can host unlimited, free, completely private repositories on your own system. Here’s the complete process:

$ mkdir -p ~/src/myproject

$ cd ~/src/myproject

$ git init --bare

$ cd ~

$ git clone ~/src/myproject

$ cd myproject

There, you’re done. Now you have a 100% fully functional Git repo that doesn’t require a network connection and supports every single Git feature. Pull it, push it, branch it, revert it, whatever: it’s your own repo and you can do whatever you want with it. And you don’t have to sign up for anything, or agree to a Terms of Service, or share your work, or trust a company you don’t know very well.

If you want to move your repo to another server later, you can copy ~/src/myproject to its new home via whatever means you find most convenient, use git remote set-url origin [...] to point your existing work toward the new location, and then go on about your business as usual without changing any of your workflow.

GitHub and GitLab have a lot of nice features that may be totally irrelevant if you’re not collaborating with a team. Never forget that you can host Git projects yourself, easily and for free.

Oh, and if you do find yourself needing to work with a handful of people and don’t need all of the integration features of the commercial options, I highly recommend Gitea. It’s a tiny little service you can host yourself and it takes very few resources. I use it whenever I need my Git repo to be accessible across the Internet.

On Generated Versus Random Passwords

I was reading a story about a hacked password database and saw this comment where the poster wanted to make a little program to generate non-random passwords for every site he visits:

I was thinking of something simpler such as “echo MyPassword69! slashdot.org|md5sum” and then “aaa53a64cbb02f01d79e6aa05f0027ba” using that as my password since many sites will take 32-character long passwords or they will truncate for you. More generalized than PasswordMaker and easier to access but no alpha-num+symbol translation and only (32) 0-9af characters but that should be random enough, or you can do sha1sum instead for a little longer hash string.

I posted a reply but I wanted to repeat it here for the sake of my friends who don’t read Slashdot. If you’ve ever cooked up your own scheme for coming up with passwords or if you’ve used the PasswordMaker system (or ones like it), you need to read this:

DO NOT DO THIS. I don’t mean this disrespectfully, but you don’t know what you’re doing. That’s OK! People not named Bruce generally suck at secure algorithms. Crypto is hard and has unexpected implications until you’re much more knowledgeable on the subject than you (or I) currently are. For example, suppose that hypothetical site helpfully truncates your password to 8 chars. By storing only 8 hex digits, you’ve reduced your password’s keyspace to just 32 bits. If you used an algorithm with base64 encoding instead, you’d get the same complexity in only 5.3 chars.
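The keyspace arithmetic there is easy to check. A small sketch, assuming every character is chosen uniformly from its alphabet (16 symbols for hex, 64 for base64):

```python
import math


def keyspace_bits(alphabet_size, length):
    """Bits of keyspace for `length` characters drawn from `alphabet_size` symbols."""
    return length * math.log2(alphabet_size)


hex_bits = keyspace_bits(16, 8)            # 8 hex digits, 4 bits each
base64_chars = hex_bits / math.log2(64)    # base64 carries 6 bits per character

if __name__ == "__main__":
    print(hex_bits)
    print(round(base64_chars, 1))
```

Eight hex digits give 32 bits, and 32 / 6 is about 5.3 base64 characters.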

Despite what you claim, you’re really much better off using a secure storage app that creates truly random passwords for you and stores them in a securely encrypted file. In another post here I mention that I use 1Password, but really any reputable app will get you the same protections. Your algorithm is a “security by obscurity” system; if someone knows your algorithm, gaining your master password gives them full access to every account you have. Contrast with a password locker where you can change your master password before the attacker gets access to the secret store (which they may never be able to do if you’ve kept it secure!), and in the worst case scenario provides you with a list of accounts you need to change.

I haven’t used PasswordMaker but I’d apply the same criticisms to them. If an attacker knows that you use PasswordMaker, they can narrow down the search space based on the very few things you can vary:

My comments about the modifier being part of your password? Basically you’re concatenating those strings together to create a longer password in some manner. There’s not really a difference, and that’s assuming you actually use the modifier.

So, back to our attack scenario where a hacker has your master password, username, and a URL they want to visit: disregarding the prefix and suffix options, they have 6 * 13 * 13 * 28 = 28,392 possible output passwords to test. That should keep them busy for at least a minute or two. And once they’ve guessed your combination, they can probably use the same settings on every other website you visit. Oh, and when you’ve found out that your password is compromised? Hope you remember every website you’ve ever used PasswordMaker on!
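That count is just the product of the independent choices listed earlier, ignoring the prefix and suffix. A quick check, with dictionary keys that are only descriptive labels:

```python
from math import prod

# Number of settings an attacker has to try for each knob.
options = {
    "character set": 6,
    "hash algorithm": 13,
    "password length": len(range(8, 21)),  # 8 through 20 chars inclusive
    "l33t-speak setting": 9 * 3 + 1,       # 9 levels x 3 "Use l33t" times, plus "not at all"
}

candidates = prod(options.values())

if __name__ == "__main__":
    print(candidates)
```

That is a tiny search space by password-cracking standards, which is the whole problem.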

Finally, if you’ve ever used the online version of PasswordMaker, even once, then you have to assume that your password is compromised. If their site has ever been compromised — and it’s hosted on a content delivery network with a lot of other websites — the attacker could easily have placed a script on the page to submit everything you type into the password generation form to a server in a distant country. Security demands that you have to assume this has happened.

Seriously, please don’t do this stuff. I’d much rather see you using pwgen to create truly random passwords and then using something like GnuPG to store them all in a strongly-encrypted file.

The summary version is this: use a password manager like 1Password to use a different hard-to-guess password on every website you visit. Don’t use some invented system to come up with passwords on your own because there’s a very poor chance that we mere mortals will get it right.

Making DOS USB Images On A Mac

I needed to run a BIOS flash utility that was only available for DOS. To complicate matters, the server I needed to run it on doesn’t have a floppy or CD-ROM drive. I figured I’d hop on the Internet and download a bootable USB flash drive image. Right? Wrong.

I found a lot of instructions for how to make such an image if you already have a running Windows or Linux desktop, but they weren’t very helpful for me and my Mac. After some trial and error, I managed to create my own homemade bootable USB flash drive image. It’s available at http://www.mediafire.com/?aoa8u1k1fedf4yq if you just want a premade ready-to-download file.

If you want a custom version, or you don’t trust the one I’ve made — and who’d blame you? I’m some random stranger on the Internet! — here’s how you can make your own bootable image under OS X:

Relax!

There are a lot of steps, but they’re easy! I wanted to err on the side of being more detailed than necessary, rather than skipping “obvious” steps that might not be quite so easy for people who haven’t done this before.

Download VirtualBox and install it

Download FreeDOS and create a virtual machine for it

Install FreeDOS

Convert the VirtualBox disk image into a “raw” image

/Applications/VirtualBox.app/Contents/Resources/VirtualBoxVM.app/Contents/MacOS/VBoxManage internalcommands converttoraw ~/"VirtualBox VMs/FreeDOS/FreeDOS.vdi" ~/Desktop/freedos.img

This will turn your VirtualBox disk image file into a “raw” image file on your desktop named “freedos.img”. It won’t alter your original disk image in any way, so if you accidentally delete or badly damage your “raw” image, you can re-run this command to get a fresh, new one.

Prepare your USB flash drive

Copy your drive image onto the USB flash drive

Add your own apps to the image

Done.

In Defense Of The Model M

There are few joys in life like using something that is the perfect expression of its intent. Each trade has its representative tools, and their common trait is quality, even if it’s not obvious to the casual observer, and often counterintuitive. The best tools in a category are almost always the least flashy, and rarely the ones a new practitioner would choose.

The Model M keyboard is like that: it’s loud, ugly, heavy, and utterly lacking modern niceties like buttons to change your sound volume or check your email. And yet, it has that transcendent feeling that’s hard to explain, that sense of rightness where you realize that you’re using the best that’s ever been made, that every change since then has been superfluous and cosmetic. With time, the loud clacking becomes the background music of your work, the harmony that tells you that your thoughts have become words. Its beige boxiness yields to elegant simplicity and the realization that true beauty is born of function, not appearance. The sheer weight of the thing turns to solidity and the confidence that it will stay where you put it. The dearth of features becomes the singleminded dedication to the parts that really matter and a proud disregard of unneeded distractions.

A tool attains its peak when a craftsman forgets that he’s using it because it has become an extension of himself. Thus the humble Model M has become the iconic favorite of hackers everywhere, an ode to the engineers who grasped for excellence and achieved it.

Fun With Software Licenses

Did you know that you’re probably not allowed to make backups of your computer? It’s true, if you believe in the legal fiction known as “End User License Agreements” (or EULAs), which are those annoyingly long contracts where you have to click “I Agree” before you’re allowed to install some program or another.

For example, here’s a snippet of the Adobe Integrated Runtime (AIR) End User License Agreement:

2.3 Backup Copy. You may make one backup copy of the Software, provided your backup copy is not installed or used on any computer.

Nice, huh? If you install this software, its EULA forbids you from making more than one backup copy. This is a deal-breaker for businesses that keep multiple backup archives from days, weeks, and months past. According to this agreement, you could hypothetically alter your corporate storage system to ignore each of the files that would be installed, but realistically no one would ever even attempt this.

This is just one more reason to be grateful that EULAs are almost universally believed to be legally unenforceable. However, unless you’re willing to tell a jury that you don’t think you’re bound by such an agreement, remember that every piece of software with a similar license is a potential time bomb. Theoretically, you could be sued just for having it, even if you probably wouldn’t be found liable.

Fans of commercial software often talk about the “impracticality” of Free or Open Source Software, but the alternatives are starting to look a lot worse.

Don't Bump That Flash Drive

From the manual of an Asus Eee PC:

The solid-state disk drive’s head retracts when the power is turned OFF to prevent scratching of the solid-state disk drive surface during transport.

I think someone got a little zealous with the find-and-replace.

Buffer Overrun In Antitrust

Skip this unless you’re really, really geeky.

Still with us? OK. In the movie “Antitrust”, there’s a screenshot of some code that has a possible Denial Of Service vulnerability:

    /* are we doing a GET or just a HEAD */
    boolean doingGet;
    /* beginning of file name */
    int index;
    if (buf[0] == (byte)'G' &&
        buf[1] == (byte)'E' &&
        buf[2] == (byte)'T' &&
        buf[3] == (byte)' ') {
        doingGet = true;
        index = 4;
    } else if (buf[0] == (byte)'H' &&
               buf[1] == (byte)'E' &&
               buf[2] == (byte)'A' &&
               buf[3] == (byte)'D' &&
               buf[4] == (byte)' ') {
        doingGet = false;
        index = 5;
    } else {
        /* we don't support this method */
        ps.print("HTTP/1.0 " + HTTP_BAD_METHOD +
                 " unsupported method type: ");
        ps.write(buf, 0, 5);
        ps.write(EOL);
        ps.flush();
        s.close();
        return;
    }

Because I can’t resist such things, I paused the movie to read over the code. Now, I’m assuming this is Java instead of C++ because “boolean” wasn’t spelled “bool”, although I’m not sure why they’d be using Java for performance-critical code. Anyway. See the “ps.write(buf, 0, 5);” line near the end? Well, “buf” is presumably the string that the client sent to the server. If the client is broken (or malicious) enough to misspell “GET” and “HEAD”, then the server politely tries to tell the client what it did wrong by sending the first five bytes of “buf” back.

Which brings us to the hack. If “buf” is less than five characters long, then that “ps.write” line will attempt to read past the end of “buf”, and Java will throw an IndexOutOfBoundsException. If the calling function doesn’t catch that exception, boom! The service crashes: Denial Of Service. Note that this is still better than the C++ equivalent, which would typically send whatever happens to sit in memory right after the end of “buf” back to the client.
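For the curious, here is a minimal sketch of that failure and one way to guard against it. It assumes “buf” is a byte array and “ps” is a PrintStream, as the screenshot suggests. The class name, the “echoMethod” helper, and the “received” byte count are made up for illustration and are not part of the movie’s code.

```java
import java.io.ByteArrayOutputStream;
import java.io.PrintStream;

class EchoBadMethod {
    /**
     * Hypothetical helper: echo at most the first five bytes that were
     * actually received, instead of blindly asking for five.
     */
    static void echoMethod(PrintStream ps, byte[] buf, int received) {
        int len = Math.max(0, Math.min(5, Math.min(received, buf.length)));
        ps.write(buf, 0, len);
    }

    public static void main(String[] args) {
        // A two-byte "request", shorter than the five bytes the server echoes.
        byte[] shortRequest = {'H', 'I'};
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        PrintStream ps = new PrintStream(sink, true);

        // The unguarded call from the screenshot: asks for 5 bytes of a 2-byte array.
        try {
            ps.write(shortRequest, 0, 5);
        } catch (IndexOutOfBoundsException e) {
            System.out.println("unguarded write threw an IndexOutOfBoundsException");
        }

        // The guarded version only echoes what is really there.
        echoMethod(ps, shortRequest, shortRequest.length);
        System.out.println("guarded write echoed " + sink.size() + " bytes");
    }
}
```

Clamping the length is only one option. Rejecting any request shorter than five bytes before the comparisons run would work just as well.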

No, I’m not exactly good at sitting back and watching movies.

How Not To Save A Game

I was about halfway through a game called “Final Fantasy XII: Revenant Wings” on my Nintendo DS. I was having a great time and loving it until a stupid bug wiped out all the work I’d put in and made me start over.

When I was in the middle of a particularly involved battle, the red “low battery” warning light came on, so as soon as I finished I tried to save my game. Big mistake. The DS used up its remaining power during that instant and turned itself off. When I plugged it into the charger and turned it back on, I got a message saying that my game file was corrupt and had been deleted.

OK, in retrospect, I should have plugged my DS into the charger before I tried to save my game. Still, it should be impossible to destroy your old information by writing a new version of it. That’s just good design. Unfortunately, FFXII doesn’t have a good design. See, the problem is that FFXII saves its game by writing over the pre-existing save file. Since the power died during that write, the results were half old game and half new game. Hence corrupt. Hence deleted. Here’s how a competent programmer would handle the same situation:

See the difference? At no point do the two files get mingled together, and the old file stays valid and ready to use until the new one is completely written. In the absolute worst case of a power failure during the saving process, you’d lose the new information but the old data would still be intact and safe.
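On an ordinary filesystem, the write-then-swap idea might look something like the sketch below. It illustrates the general technique and is not the DS’s actual save API. The “SafeSave” class, the “.tmp” suffix, and the file names are invented for the example, and it assumes the filesystem supports an atomic rename (otherwise “Files.move” throws an exception rather than silently doing something unsafe).

```java
import java.io.IOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;
import java.nio.file.StandardOpenOption;

class SafeSave {
    /** Replace saveFile with newData without ever overwriting the old save in place. */
    static void save(Path saveFile, byte[] newData) throws IOException {
        // Hypothetical naming scheme: the new save goes next to the old one.
        Path tmp = saveFile.resolveSibling(saveFile.getFileName() + ".tmp");

        // Step 1: write the new save to a separate file. The old save is untouched.
        try (FileChannel ch = FileChannel.open(tmp,
                StandardOpenOption.CREATE,
                StandardOpenOption.WRITE,
                StandardOpenOption.TRUNCATE_EXISTING)) {
            ByteBuffer data = ByteBuffer.wrap(newData);
            while (data.hasRemaining()) {
                ch.write(data);
            }
            // Step 2: ask the OS to push the bytes to storage before going on.
            ch.force(true);
        }

        // Step 3: only now swap the complete new file into place.
        Files.move(tmp, saveFile,
                StandardCopyOption.ATOMIC_MOVE,
                StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("savegame");
        Path saveFile = dir.resolve("game.sav");
        save(saveFile, "chapter 4".getBytes(StandardCharsets.UTF_8));
        save(saveFile, "chapter 5".getBytes(StandardCharsets.UTF_8));
        System.out.println(new String(Files.readAllBytes(saveFile), StandardCharsets.UTF_8));
    }
}
```

If the power dies during step 1 or 2, the worst that is left behind is a half-written “.tmp” file, which the game can ignore or delete on the next start.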

I don’t know whether the buggy code was written by Square Enix, or if they were using Nintendo’s built-in game saving method. Regardless, it’s dumb and should be fixed ASAP for all new games.

SCOX Is Deficient And Bankrupt

Right now, as I type this, the Yahoo! Finance page for SCO has a caution sign and the text: “SCOX is deficient and bankrupt.” We’ve all been thinking it for ages, but this is the first time I’ve seen an “official” source say so. Wonder what that’s all about?